On this page

- Results at a Glance

- The Problem: Why Ferry’s Code Generator Had To Change

- Why A Custom Generator Was The Right Call

- The Architecture: A Multi-Pass Code Generation Pipeline

- How Claude Code Accelerated The Team

- Documentation As Infrastructure: AGENTS.md, CLAUDE.md, And Scoped Context

- AI-Assisted Engineering: Our Workflow

- The Results

Betterment’s Flutter codebase relied on Ferry, a stream-based GraphQL client for Dart, backed by the gql Dart library for code generation. But Ferry’s generated code predated Dart 3 and couldn’t support sealed classes, exhaustive pattern matching, or switch expressions. Fragments, the backbone of any GraphQL schema, could fail silently at runtime, uncharacteristic behavior for Dart. Cache deserialization was tricky and could easily break. Polymorphic types couldn’t be handled idiomatically.

Betterment’s team had built compensating patterns to work around these limitations, but the workarounds carried a real cost: added maintenance burden, increased cognitive load for developers, and a GraphQL experience on Flutter that was significantly more constrained than what the team was accustomed to on other platforms. Rather than continue absorbing that overhead, the team decided it was time to fix the problem at the source.

Rather than migrate away from Ferry, VGV’s team, building on a long-running Flutter partnership with Betterment, wrote a new code generation layer from the ground up, using Claude Code as a core part of the development workflow. The result: a Dart 3-native GraphQL code generator with sealed classes for polymorphic type safety, custom serialization, and a multi-pass pipeline architecture that eliminated the root causes of v1’s limitations.

For Betterment, the impact went beyond the generated code itself. The new generator removed the compensating patterns that had accumulated around Ferry’s shortcomings, reducing maintenance burden in the Flutter codebase and cognitive load across the team’s broader GraphQL practice. With polymorphism and fragments working idiomatically, product teams could move faster, writing GraphQL code on Flutter the same way they did on other platforms, without workarounds slowing them down.

This is the story of how we built it, what we learned about agentic coding in practice, and what other engineering teams can take from the experience.

Results at a Glance

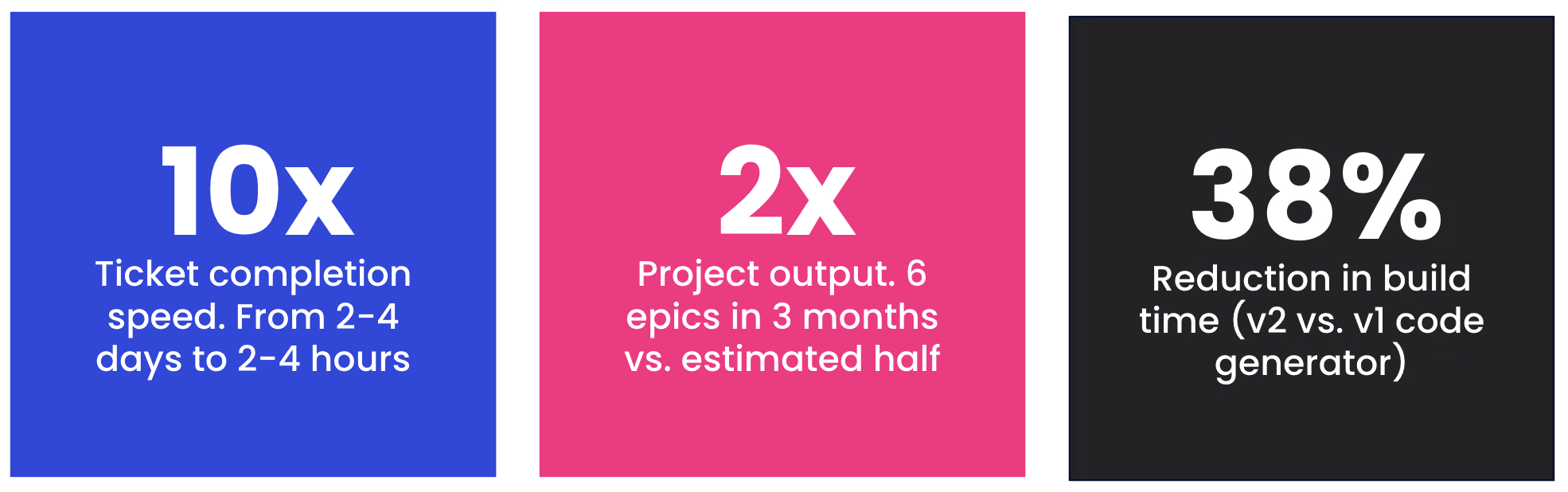

- 10x ticket velocity: Tasks that previously took 2-4 days completed in 2-4 hours

- 2x project output: 6 epics delivered in 3 months (rather than an estimated 6 months without Claude)

- 38% faster builds: v2 code generator build times vs. v1

- 4 critical Ferry code generation issues resolved: Deserialization, polymorphism, and fragment handling issues eliminated

- Generated file size increase: Only 0.7%, with simpler output and no

built_valuedependencies

The Problem: Why Ferry’s Code Generator Had To Change

What Was The Change Needed?

Ferry relied on gql, a library that predates Dart 3 and doesn’t support modern features like sealed classes for exhaustive pattern matching and switch expressions for type discrimination.

This created four critical issues:

- Type matching failures for interface fragments, making it impossible to use fragments on interface types.

- Inability to deserialize polymorphic fragments from the cache.

- Heavy dependency on the built_value serialization library, which failed to serialize JSON fields correctly. The generated code was also overly verbose, making testing difficult.

- No fragment reuse on polymorphic types, leading to widespread code duplication.

These weren’t problems unique to Betterment. Every team relying on gql for code generation faced the same limitations: Ferry’s code generator simply hadn’t kept pace with Dart 3. A partnership between Betterment, VGV, and Claude Code made it possible to move fast enough to fix the problem at the source, improving Betterment’s GraphQL practice while contributing improvements that benefit the broader Flutter community.

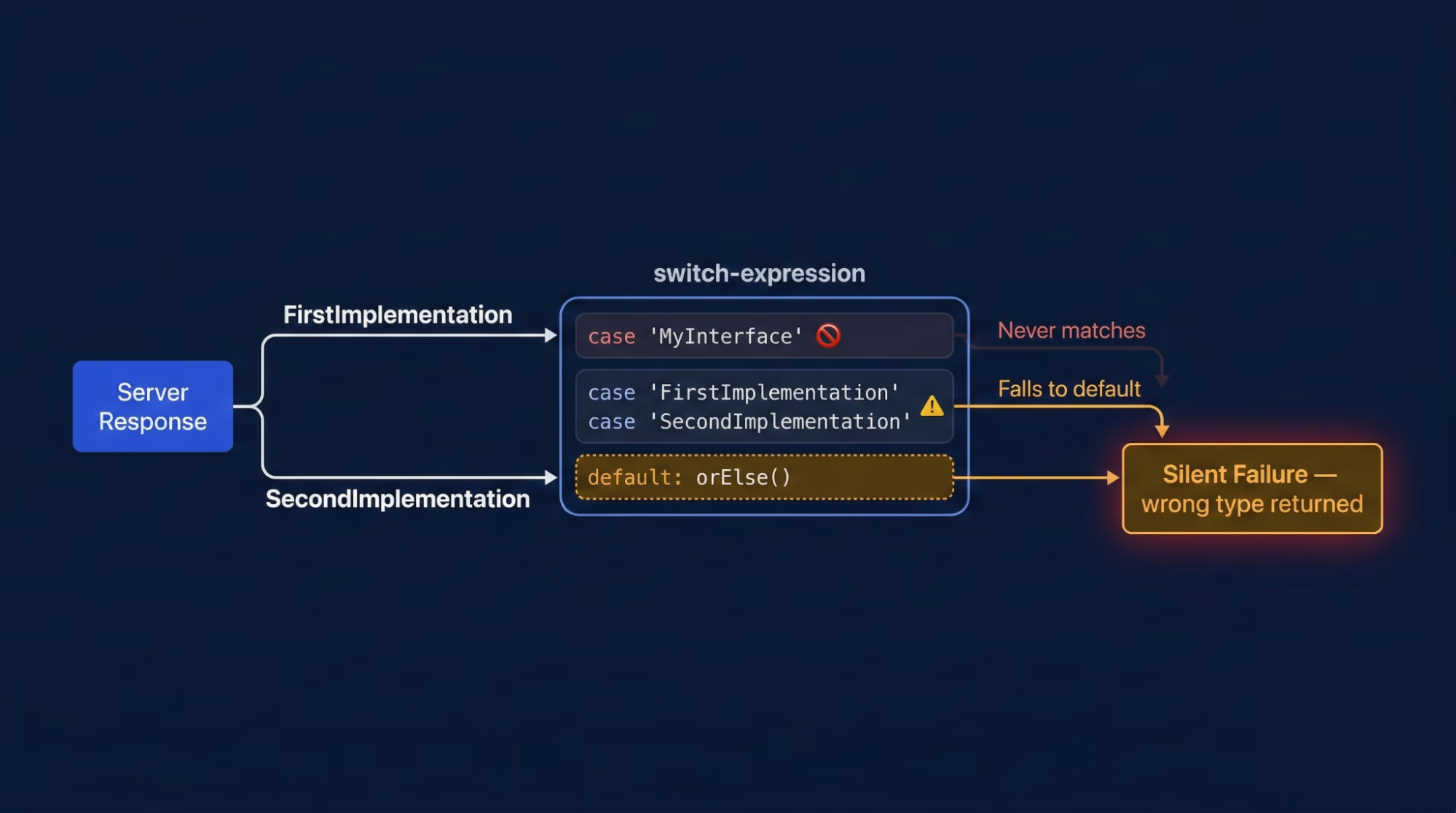

A Concrete Example: The Silent Casting Failure

The code generation work was pure Dart, not Flutter, but the issue manifests clearly in fragment deserialization. In GraphQL, you have interfaces and fragments. A fragment can target an interface, like this:

interface MyInterface {

// common fields

}

type FirstImplementation implements MyInterface {

// type specific fields

}

type SecondImplementation implements MyInterface {

// type specific fields

}And then a fragment on that interface:

fragment MyFragment on MyInterface {

// fragment fields selection

}The v1 code generator produced this:

switch (G__typename) {

// Server sends `FirstImplementation` or `SecondImplementation`,

// never `MyInterface`

case 'MyInterface':

return MyInterface(this);

case 'FirstImplementation':

return FirstImplementation(this);

// Always hits this for interface fragments

default:

return orElse();

}This created a silent failure: the fragment never matched the interface type, forcing developers to handle all interface types manually. This wasn’t a bug in the traditional sense, it was a design limitation of the v1 code generation. To compensate, Betterment’s engineers duplicated code, avoided idiomatic GraphQL polymorphism entirely, and added extra test coverage to protect against the gaps the generated code couldn’t catch.

Why A Custom Generator Was The Right Call

GraphQL generates models directly from schemas and operations, eliminating the need for manual model definitions. Generated models become the domain model directly, with no additional mapping layer. This makes the code generator critical to the entire application architecture.

Switching to a different GraphQL client would have required rewriting the integration across the entire codebase. Instead, the team built a custom code generator that produces sealed classes for polymorphic type safety, includes custom serializers, and removes heavy dependencies.

Forking and improving gql rather than replacing Ferry preserved the existing client layer while unlocking Dart 3-native code generation — cleaner output, faster builds, and a clear migration path for Betterment’s production codebase.

The Architecture: A Multi-Pass Code Generation Pipeline

How The Builders Work

SchemaBuilderV2, DataBuilderV2, ReqBuilderV2, and VarBuilderV2 function similarly to their v1 counterparts — each running in isolation via build_runner, with distinct responsibilities for visiting document nodes and generating request, variable, or data classes. The critical change lives in DataBuilderV2, which introduces multi-pass code generation.

Why Single-Pass Failed

The v1 generator used a single pass: it started at the document root, found an operation, and immediately resolved types and nullability. This single-pass approach created context blindness — a fragment used in Operation A was regenerated when it appeared in Operation B, causing duplication and unnecessary complexity.

The v2 Multi-Pass Pipeline

The v2 architecture introduces a multi-pass pipeline, splitting code generation into three distinct stages with SchemaVisitor, FragmentVisitor, and OperationVisitor:

- Step 1 (SchemaVisitor): Identifies all schema types - enums, inputs, scalars, objects, interfaces, and unions - then registers them in a SchemaRegistry available to subsequent steps.

- Step 2 (FragmentVisitor): Analyzes fragments in isolation, resolving their exact type before they are used in operations. Results are stored in a FragmentRegistry.

- Step 3 (OperationVisitor): Assembles everything using the registries from previous steps, identifies operations, and calls class builders to generate Dart classes.

Despite traversing nodes multiple times, the multi-pass approach has no measurable impact on build time. The real value is correctness: because the FragmentVisitor resolves each fragment’s type in isolation before any operation uses it, shared fragments produce a single, consistent type across the entire codebase.

In v1’s single-pass approach, the same fragment could produce subtly different generated types depending on which operation context it was resolved in, making true fragment reuse impossible.

Removing built_value: Why Thin Serialization Won

While built_value was once the standard for Dart serialization, it had several drawbacks:

- Heavy boilerplate

- Slow build times

- Failed deserialization of

Map<String, dynamic>, which breaks all GraphQL operations using the JSON type - Handling generics and polymorphism requires manual serializer registration for each type; omitting even one causes runtime crashes during deserialization

The team replaced this with custom serialization, adding fromJson and toJson methods to each generated class. This approach reduces complexity, works well with static methods and factory constructors, and avoids plugins and builders entirely. It eliminates built_value dependencies and improves both runtime and build performance.

Sealed Classes: Exhaustive Polymorphism For GraphQL

The previous version relied on built_value and manual type checking to handle polymorphism. This approach risked runtime errors: forgetting to handle any type variant could cause crashes or silent failures. Without exhaustiveness checking, the compiler couldn’t verify that all type variants were handled. Betterment’s end-to-end testing provided coverage against these runtime failures, but the feedback loop was slow — a missed type variant wouldn’t surface until tests ran, rather than at compile time. The team wanted errors caught in the editor, not in CI.

Dart 3’s sealed classes enable switch expressions that are exhaustive: the compiler ensures all subtypes are handled, preventing missed cases while allowing access to shared base fields. Each sealed hierarchy includes an unknown subclass that acts as a catch-all, so when new types are added to the schema, existing clients handle them gracefully instead of breaking. The result is cleaner, safer code with built-in forward compatibility that enables idiomatic polymorphic GraphQL patterns to benefit from Dart’s type safety.

Design Decisions Along The Way

The team debated whether individual unit tests or comprehensive integration tests would better cover functionality and edge cases. We also discussed how to separate responsibilities between visitors and class spec builders, and how to maximize code reuse across them. These weren’t major concerns because the architecture was well-defined; we encountered only minor issues during implementation.

How Claude Code Accelerated The Team

Ramping Up On An Unfamiliar Domain

The VGV team had prior, but not vast experience with code generation and build_runner, which made the project’s early phases a steep learning curve.

We asked Claude foundational questions before starting v2: “How does build_runner work? What are its entry points? How is the code structured? How do we invoke builders?” Within a few clarifications, we understood the key concepts: build_runner runs independently; factories in build.yaml specify input and output files; builders are isolated and communicate only through generated assets.

Claude was essential to understanding how gql works. This helped us own tickets independently: We could first analyze what was needed and where changes should go. Once we understood v1, we used Claude to walk through the v2 architecture documentation — the multi-pass pipeline, each visitor’s scope, and how to wire them together — which clarified the entire new design.

How Much Time Did Claude Code Save On Ramp-Up?

Understanding v1’s architecture and build_runner basics could have taken weeks; learning v2’s design might have taken another week or more. Based on similar projects, we would have spent the first month primarily learning rather than developing.

Claude compressed this ramp-up to just a few days, allowing the entire VGV team to start productive work immediately.

Documentation As Infrastructure: AGENTS.md, CLAUDE.md, And Scoped Context

The Story Behind

The Betterment team, with its deep expertise in Ferry and GraphQL, had already faced and resolved four critical production issues. They had meticulously diagnosed the root causes and explored every angle before starting any v2 development. The GraphQL specification served as their definitive guide. This led to the v2 architecture—a multi-pass pipeline, sealed classes, and thin serialization—which was explicitly designed against the spec with a clear end goal. Their issue write-ups were more than simple bug reports; they included root cause analyses, reproduction steps, and proposed solutions linked to the architectural documentation.

Furthermore, the documentation was not a static, initial draft. It was continuously refined and validated; they repeatedly used Claude Code against the documentation itself to ensure it was functional context for AI-assisted development. The documentation was considered complete and ready—embodying the “documentation as infrastructure” thesis—only when Claude could independently take a ticket, read the architecture docs, and generate correct, unambiguous code. This illustrates not just the need for good documentation, but what “good enough to drive an AI-assisted engagement” truly means and how to achieve it.

This entire process—from identifying problems and designing the architecture to rigorously battle-testing the documentation—is what enabled VGV’s high velocity.

The Documentation That Was Already In Place

The Betterment team had architecture and implementation documents in place when we joined, with major issues already catalogued. We updated these docs as we encountered problems and obstacles.

The project was comprised of six epics; the first three of which had complete ticket breakdowns when we joined. A structured ticket template helped Claude separate concepts and objectives clearly throughout the engagement. The final epic included more diverse tickets focused on refactoring and cleanup.

How AGENTS.md and CLAUDE.md guided Claude’s Behavior

The AGENTS.md file structure Betterment created was excellent. The root-level file provided concise guidance for Claude’s repository work, including specification references, guidance, and review checklists. The documentation was organized by architecture, design principles, implementation details, v1 issues, epics, and tickets.

Package-level AGENTS files and tickets referenced specific documentation indices tied to task scope. This let Claude work with focused scope and task-specific context, avoiding overwhelming global documentation. The documentation’s level of detail, description style, and topic-based structure all contributed to scoped, manageable context.

Tickets In The Repo: What Worked And What Didn’t

Including tickets in the repo let Claude reference requirements when working on a task. Internally, Betterment already uses the Atlassian MCP server to connect Claude directly to Jira — humans and agents pull context from the same source of truth. But at the time of this engagement, we weren’t logistically equipped to share that MCP setup across both organizations, which meant copying Jira tickets into the repository as a practical workaround. Jira was the official source of truth, but the repository sometimes fell out of sync.

Lesson learned: The ideal setup would have been connecting a Jira/Confluence MCP to Claude instances. This would let Claude fetch tickets and docs on demand, eliminating local documentation copies entirely — and MCP connections to external systems are exactly the kind of infrastructure investment that pays dividends across projects.

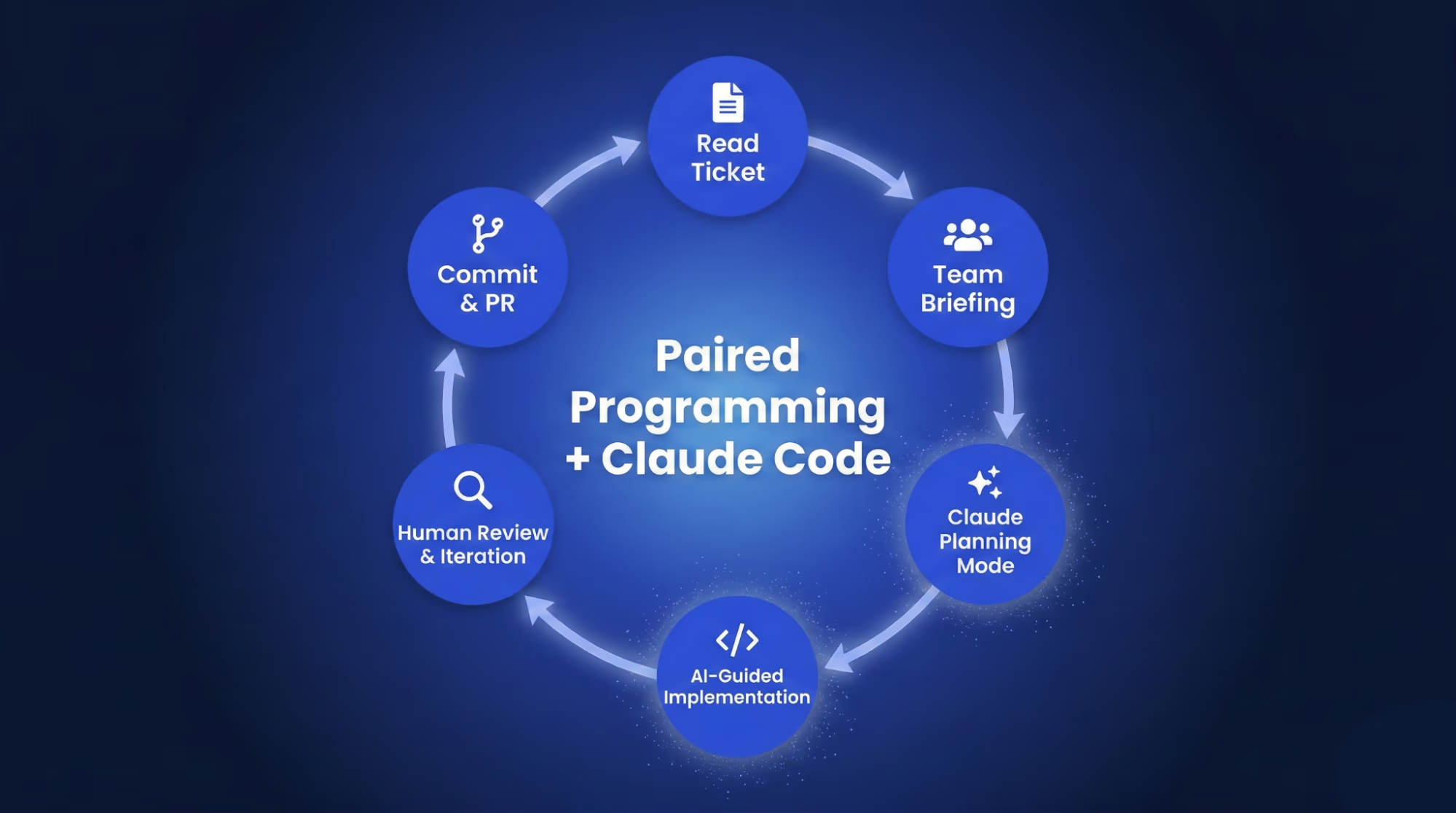

AI-Assisted Engineering: Our Workflow

We conducted team-wide pair programming sessions for collaborative learning. The ticket owner would explain the task to the team and identify key areas needing work: new files, model changes, and so on.

With a plan in place, we’d start with a simple prompt: “Claude, let’s work on ticket XYZ.” While basic, this prompt worked because Claude had full access to documentation and tickets. Claude would review the documentation and tickets, summarize them, and propose a detailed plan.

We’d review the plan and suggest refinements like reusing existing files or refactoring repeated methods. After several iterations (usually to fix missed imports), Claude would summarize what was done, which helped the team review the code and changes. We’d ask Claude to iterate if anything needed refinement.

We committed the code and wrote the PR description manually, using Claude’s summary to ensure we captured all changes. Then we’d move to the next ticket with a different team member leading. Claude also assisted with PR reviews, which complemented — but never replaced — human review. Because Claude had the same context as during development, its review comments helped reviewers orient quickly, but every PR was reviewed and approved by a human engineer before merging.

Ticket Velocity: Hours, Not Days

The early tickets in the first epic were the most complex as they were our introduction to the project. We weren’t yet confident in the architecture, and the learning curve was steep.

Without Claude, those tickets would have taken 2-4 days; with Claude and our workflow, we finished them in 2-4 hours. We rarely spent more than a day on a ticket unless the build failed or the Betterment team requested changes during review.

Test tasks were another example. The nature of the project required bootstrapping new golden tests for generated code throughout the process, as each epic expanded the generator’s capabilities, the team needed golden tests to protect against regressions going forward.

These tests involved many edge cases and repetitive GraphQL mocking, which Claude handled significantly faster than manual implementation. Claude also helped the team more quickly validate that the golden tests themselves were sound; that they were actually capturing the right generated output and would catch meaningful regressions, not just recording whatever the generator happened to produce.

Claude Code Pull-Request Reviews: What We Learned About AI As A Reviewer

Claude Code’s PR review capability was particularly effective. Since Claude had the same context as during development, it could review with deep, scoped knowledge. Claude’s reviews were fast, and combined with pair programming, reduced review bottlenecks significantly.

Interestingly, Claude sometimes suggested different improvements during review than during development, even with identical context. We’d think, “Why didn’t Claude catch this during implementation?” But AI responses aren’t deterministic — the same input can produce different outputs. Think of Claude as two different team members: one helping with development, another with review.

Even if Claude suggests different things at different times, you’ll reach the same outcome if your context is clear. Understanding this removes the temptation to “fix” the inconsistency. Even humans reviewing their own code weeks later notice things they missed before.

The biggest win: Claude Code reviews eliminated time-zone bottlenecks that could have slowed down our distributed team. Because Claude’s review pass caught structural issues, style inconsistencies, and missed edge cases before human reviewers ever opened the PR, engineers could focus their review time on architectural judgment and domain-specific correctness rather than mechanical concerns. Once we trusted the process, we let Claude approve and merge PRs in selected repositories (while keeping human in the loop review where required by our risk management policies), enabling true asynchronous continuous integration. If something broke, we’d file a follow-up ticket instead of blocking developers in different time zones waiting for feedback.

Minimizing Hallucinations Through Documentation

Hallucinations increase with ambiguity: unclear documentation, vague prompts, and Claude identifying patterns it assumes apply more broadly than they do. Eliminating ambiguity — through deterministic documentation and precise prompts — significantly reduces hallucinations. By documentation, we mean architecture guides, ticket descriptions, prompts, and any contextual information.

Because tickets and architecture were well-documented, Claude rarely produced confidently incorrect code, though small, subtle bugs and tricky edge cases did slip through Claude’s initial implementations at times. Once developers identified such bugs, we’d ask Claude about the specific edge case with an example, and Claude could usually spot the issue and propose a fix.

Onboarding: “Any Doubt, Ask Claude”

If a new engineer joined the project today, their first day would look similar to my own first week: asking Claude everything. Too much documentation can overwhelm new engineers, leaving them unsure where to begin.

Claude helps by letting you ask specific questions — “Where’s the code entry point?” “What should I read first?” “Where are data classes generated?” — rather than navigating documentation yourself. This helps new engineers learn the codebase and adapt to AI-assisted development.

The Results

V2 Code Generator Capabilities

The v2 generator didn’t add new capabilities but significantly improved polymorphism handling. It handles the same operations as v1 — queries, mutations, and subscriptions — plus regular fragments, interface fragments, inline fragments, nested fragments, scalar types (String, Int, Float, Boolean, ID), custom scalars, and enums.

Benchmarks

Benchmarks show v2 build times decreased by 38%, generated file size increased only 0.7%, and the output is simpler without built_value dependencies. All four critical issues — deserialization, polymorphism, and fragment handling — were completely resolved.

Overall Productivity

Rough estimates: tasks that would have taken 2-4 days completed in 2-4 hours with Claude; each epic took about 2 weeks. We completed 6 epics in 3 months, with demos at each sprint’s end. Without Claude, we estimate we’d have delivered roughly half the work. These aren’t exact figures, but they’re indicative.