In February 2026, I sat in the Trackhouse Racing pit box at Daytona watching a data processing pipeline we’d been building fall further behind with every lap. The data arrived in real time. Our processing didn’t. Results showed up 16 to 24 hours after the checkered flag.

Three weeks later, we had a completely rewritten pipeline in Rust, a language I’d never touched, processing the same workload in real time during the race. This weekend at Darlington, it runs live.

This is the story of how we got there with AI, and why the hardest part wasn’t writing the code.

The Problem

Trackhouse Racing needed complex, computationally intensive processing of NASCAR telemetry data in real time, during the race.

The scale is hard to overstate. 22,000+ messages per second across dozens of data points, feeding a processing pipeline that needs to run 50 times per second across 36 cars. The results need to go to three places at once: LiveKit for real-time visualization in Flutter apps, Azure Blob Storage for analytics, and Databricks for the data science team. And it all needs to run for 3.5-hour races without losing data.

Our Python prototype got the processing right. The math was correct. The data arrived on time. But Python’s computational overhead meant each processing tick took more than 200ms at a data rate that demanded sub-20ms. Every tick that ran long pushed the next one further behind. Over a 3.5-hour race, the backlog snowballed. Results that should have been available in milliseconds took days.

Everything worked. It just wasn’t fast enough. We needed orders of magnitude more performance to keep pace with the data.

Why Rust

Rust kept bouncing around in my head as an alternative. Fast, popular for server-side work, increasingly the go-to choice for teams that needed serious performance. It seemed like it could be the right fit.

The catch: I’d never written a line of it. And with NASCAR racing every week, every week without a reliable pipeline was another race where the team didn’t have the real-time data they needed to improve on-track performance. We needed to move fast without cutting corners.

At VGV, we’ve been investing heavily in how AI tooling fits into our engineering practice. Not as a shortcut, but as an accelerator grounded in taste and trust. Taste to know what the right architecture for the experience looks like. Trust to make sure it actually works in production. This was exactly the kind of challenge that approach was built for. I would use Claude Code, Anthropic’s AI coding assistant, as my primary implementation partner. I would drive the architecture, make the decisions, own the outcome. Claude would write the Rust.

What AI-Assisted Engineering Actually Looks Like

The popular narrative around AI coding tools is simple. Describe what you want, get code back, ship it. That narrative is wrong. Or at least, it leaves out the part that actually matters: the gap between AI-generated output and production-grade software.

Here’s what actually happened over three weeks.

Week 1: Understanding the Problem and Planning the Migration

Before writing any Rust, we needed to understand what we were building and why. The product goal was straightforward. Give the Trackhouse team real-time access to processed telemetry during the race, not hours after. That meant the pipeline had to keep pace with a 50Hz data stream across 36 cars, publish to three different outputs simultaneously, and run stable for 3.5 hours without manual intervention.

I started by pointing Claude at the existing Python codebase. Not to rewrite it line by line, but to deeply analyze it. Claude produced detailed assessments of the architecture, identified where the performance bottlenecks lived, mapped out every data transformation and output path, and generated migration plans that laid out what could port directly and what needed to be rethought from scratch. These reports became the blueprint for the entire project.

From there we designed the Rust workspace together. But the key decisions weren’t about code. They were about architecture. How do we handle backpressure when Azure uploads are slow but LiveKit needs to maintain a smooth framerate? What happens when one output falls behind? How do we guarantee data durability without stalling the real-time stream?

These aren’t questions an AI answers well on its own. They require understanding operational constraints. NASCAR’s data stream has specific timing characteristics. A single dropped frame matters less than a pipeline stall. I made those calls. Claude implemented them.

Week 2: Stress Testing and Hitting Walls

The first Rust pipeline was based directly on the Python architecture. A monolithic process that ingested data, ran the processing, and published to all outputs in one place. The performance gains were immediate. 200-400ms per tick in Python down to 2-4ms in Rust. A massive improvement. But the system started showing cracks under real race conditions.

We ran replays of full races and watched everything. Memory usage, disk throughput, message latency, consumer lag. Monitoring every 10 minutes, just observing. Two problems emerged.

The first was speed. The core processing could keep pace with the data stream, but the outputs couldn’t always keep up. Azure Blob uploads take time. Databricks publishes over gRPC with acknowledgment latency. When those slowed down, they back-pressured the processing, and the whole pipeline stalled.

The second was data loss. We needed durable storage of every tick for analytics and data science. Losing data wasn’t acceptable. But the act of durably storing everything was exactly what was dragging the system down. The Databricks publisher would fall behind, its memory would grow as it buffered unsent records, and eventually it would crash and take the entire pipeline down with it, including the real-time stream the team was actually watching.

This was the core tension and the critical insight that shaped everything that followed. The processing was fast enough. The real-time stream was fast enough. But we couldn’t guarantee durable capture of every data point without risking the system that was supposed to deliver it. In a monolithic architecture, the requirement to store everything conflicted with the requirement to never stall.

Week 3: Re-Architecture and Rapid Iteration

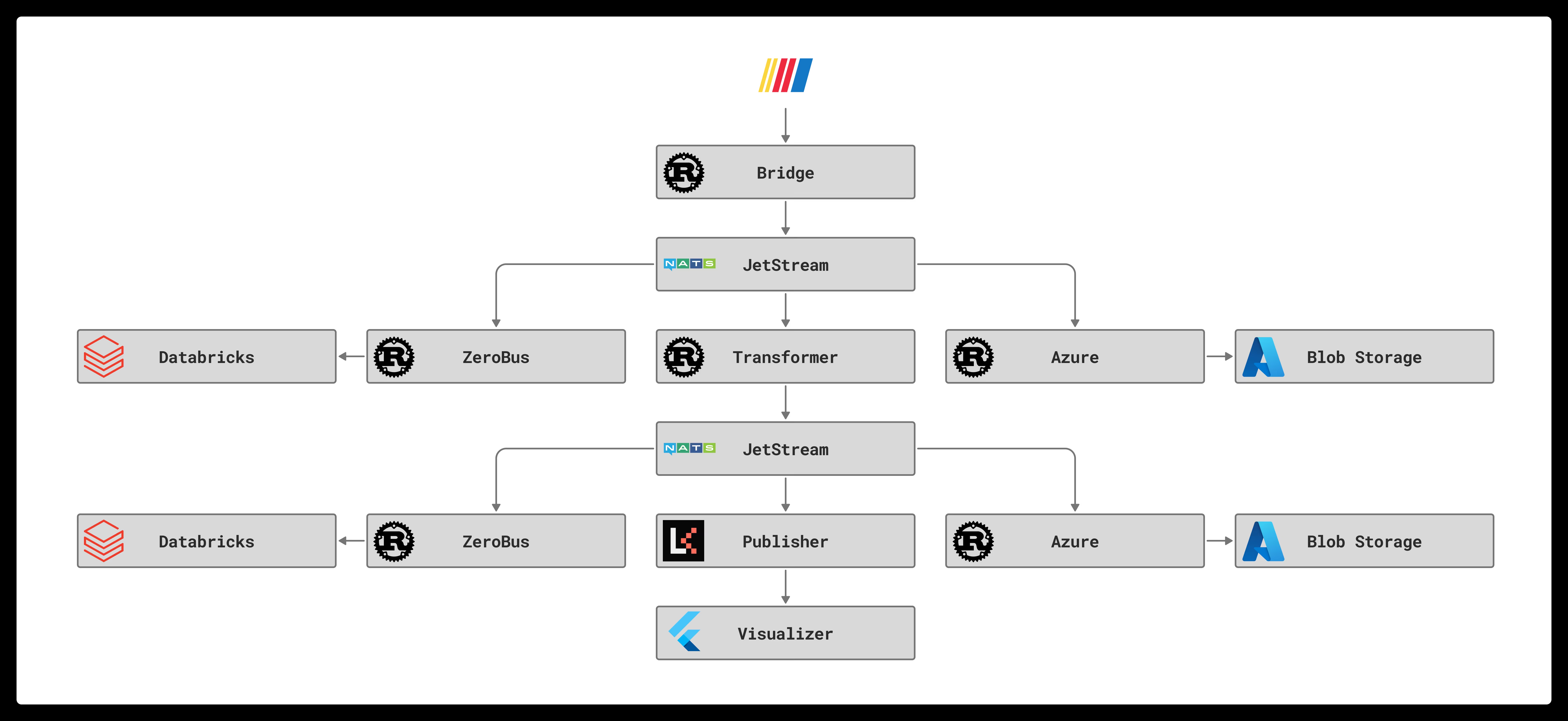

Week 3 was about tearing apart the monolith and rebuilding it as independent pieces. We designed a NATS JetStream fan-out architecture. 9 independent binaries, each responsible for exactly one thing. The processing engine reads from one stream and writes to another. LiveKit, Azure, and Databricks each have their own consumer binary. If one crashes, the others keep running.

This design came from a conversation, not a prompt. I described what we’d observed during stress testing, and we iterated on solutions until we landed on the fan-out model. Claude wrote the integration code, the consumer reconnection logic, the compression and retention configuration. I reviewed every architectural choice.

What followed was a rapid cycle of deploy, test, observe, iterate. We’d run a full race replay, monitor it, find an issue, fix it, redeploy, and test again. In a single 48-hour stretch we shipped six patch releases, each one informed by what we’d observed in the previous test.

This is where Claude’s value went beyond writing code. Every issue we found required deep analysis. Memory growing in a third-party SDK. A consumer silently dying without logging an error. A visual artifact that only appeared at specific points on the track. These weren’t problems you solve by reading the docs. Each one required tracing through multiple layers of the system, testing hypotheses, ruling out dead ends, crunching hundreds of millions of telemetry records to find patterns, and sometimes discovering that the root cause was something completely different from what the symptoms suggested.

A person doing that analysis manually would take days per issue. Claude could do it in minutes, try multiple pathways simultaneously, and present findings I could evaluate and act on. That speed of analysis is what made it possible to ship those releases in two days instead of six weeks.

The fixes ranged from one-line changes to completely restructuring how data was packaged and sent. One issue was caused by a third-party SDK that couldn’t handle large payloads efficiently. The solution was breaking data into much smaller chunks, which dropped memory usage from gigabytes to under 100MB. Another bug had been present since the original Python codebase and nobody had noticed. The ability to rapidly and deeply analyze complex problems, weigh solutions, and iterate is what turned a fragile prototype into a production system.

The Pipeline Today

The data flows through six stages, each running as its own independent binary. The ingest bridge connects to NASCAR’s NATS feed and filters it into a local JetStream server. The processing engine picks up every tick and runs the computation, producing output for 36 cars across thousands of data points. From there, the LiveKit publisher packs results into a compact binary frame and pushes them via WebRTC at 50Hz to a multi-platform Flutter ecosystem of web, mobile, and desktop apps sharing a common real-time transport layer. The Azure Blob writer saves Parquet files for analytics. The Databricks publisher streams records in real time via gRPC.

Every stage is independent. The processing engine handles each tick in 0.04-0.08 milliseconds, down from 200-400ms in Python. The pipeline ingests 22,000+ messages per second and ran a full 3.5-hour race processing over 227 million messages with zero restarts and minimal drift between the live data and the slowest consumer. If the Databricks publisher falls behind, it catches up without affecting the real-time LiveKit stream. If any process crashes, systemd restarts it and the durable consumer resumes where it left off.

What I Learned About Working With AI

It’s not about the code generation

The most valuable thing Claude did wasn’t writing Rust. It was being a collaborator who could hold the entire system in context. The message formats, the processing math, the upload retry logic, the binary frame protocol. It helped me reason about how changes in one place affected everything else.

When I said “the Databricks consumer is crashing after 60 seconds,” Claude didn’t just suggest increasing the memory limit. We traced the growth through the SDK’s buffer, measured the payload sizes, calculated what the theoretical maximum should have been, found the gap between theory and reality, and identified that the data packaging was the real problem. The fix wasn’t tuning a config. It was rethinking how we structured the data.

The human still drives

Every architectural decision was a human decision informed by operational experience. I know what happens at a NASCAR pit box when the network drops. I know that losing 10 minutes of durable uploads is worse than a 2-second visual stall. Claude doesn’t have that context unless I provide it.

The AI accelerated the implementation of those decisions by orders of magnitude. But it didn’t make them.

Testing is non-negotiable

We ran the pipeline through a full 3.5-hour race replay before declaring it ready. We monitored memory, disk, CPU, message throughput, and consumer lag every 10 minutes. We found and fixed 6 bugs in production-like conditions over two days of testing.

AI makes it tempting to move fast. The discipline is in not shipping until you’ve validated at scale.

Unfamiliar languages aren’t the barrier they used to be

I went from zero Rust experience to a production pipeline processing 22,000 messages per second in three weeks. Not because Rust is easy, but because the AI handled the syntax and idioms while I focused on architecture and correctness.

I still don’t write Rust fluently by hand. I don’t need to. I understand the system because I’ve read and reviewed thousands of lines of it. The AI wrote the first draft. I own what shipped.

The Bigger Picture

This project isn’t a story about AI replacing engineers. It’s a story about what happens when you combine AI speed with the taste to make the right decisions and the trust to ensure they hold up under real conditions.

Three weeks with an unfamiliar language. A pipeline that processes millions of data points in real time during a NASCAR race.

That’s not magic. That’s taste and trust, accelerated by a new kind of tool.

The pipeline runs live at Darlington this weekend. The team will have real-time data during the race for the first time. That’s what matters.

Very Good Ventures helps teams build production-grade software at AI speed, with the taste to know what’s right and the trust to make sure it works. If you’re interested in how this approach could accelerate your team, reach out.