At Very Good Ventures, we kept running into the same gap with AI coding assistants: the tools were great at generating code, but they didn’t know how we work. Our teams follow a consistent set of engineering practices — clean architecture, comprehensive testing, YAGNI, and clear layer separation. These practices scale. But no AI tool understood them.

So we built VGV Wingspan — a Claude Code plugin that encodes our engineering workflow as AI-driven skills and specialized agents. Not as a replacement for developers, but as a collaborator that understands the full lifecycle: from exploring an idea to shipping a pull request.

The Problem: AI Is Fast, But Fast Isn’t Enough

AI coding assistants can generate code quickly. But speed without structure creates problems:

- No discovery phase. Jumping straight to code skips the “what are we actually building?” conversation.

- No plan. Generated code often addresses the wrong problem — or the right one in a way that breaks existing architecture.

- No quality gate. Without review, AI-generated code can introduce subtle violations — wrong state management patterns, missing tests, broken layer boundaries.

We wanted a tool that made AI follow the same workflow our engineers follow: explore the problem, plan the approach, build with discipline, and review before shipping.

Tech-Agnostic by Design

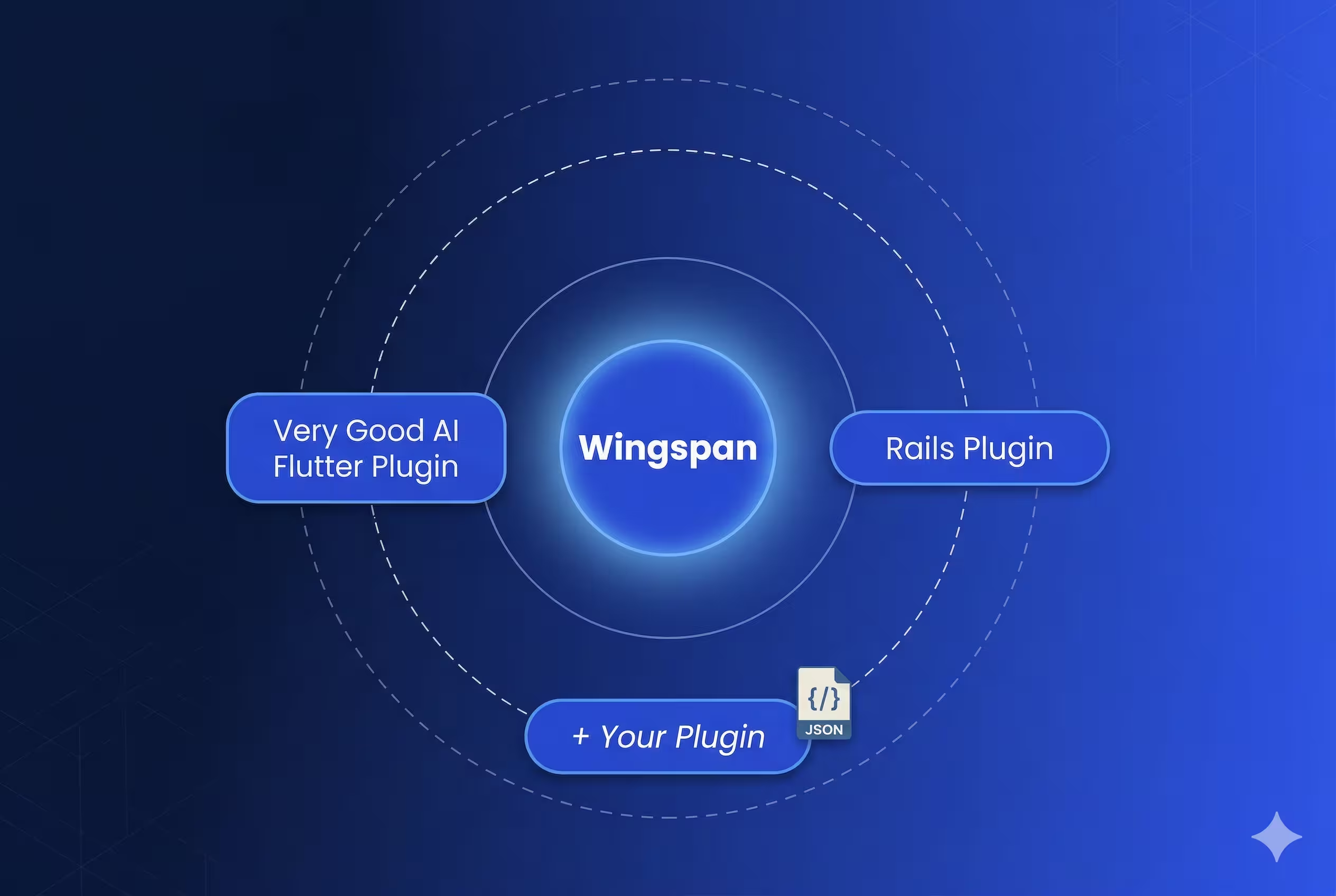

Early versions of Wingspan were Flutter-specific. We had Flutter linting rules, Dart formatting hooks, and BLoC-specific review logic baked in. It worked. But only for Flutter projects.

We made a deliberate decision to separate concerns: Wingspan handles the workflow (brainstorm, plan, build, review), and companion plugins handle technology-specific conventions (linting, formatting, scaffolding, and framework patterns).

This means Wingspan works with any stack. The engineering principles it enforces — clean architecture, comprehensive testing, YAGNI, and clear layer separation — are universal. The Flutter-specific rules moved into the Very Good AI Flutter Plugin, which Wingspan automatically recommends when it detects a Flutter project.

Wingspan also integrates MCP servers that extend its capabilities across the workflow. Figma connects design assets directly to the development process, so brainstorm and plan phases can reference real designs instead of verbal descriptions. Context7 fetches up-to-date documentation for libraries and frameworks, ensuring that plans and implementations use current APIs rather than relying on stale training data.

The Recommendation System

This design decision is worth pausing on. Detection isn’t limited to scanning existing project files: Wingspan also picks up on the technology specified during brainstorm or plan phases. Start a brainstorm about a new Flutter app in an empty directory, and Wingspan recommends the Flutter companion plugin before any code exists. The recommendation is unobtrusive and appears only once per session.

The system is extensible without changes to Wingspan itself: adding support for a new ecosystem takes only a configuration file and a hook script. No redeployment required.
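To make the detect-and-recommend-once behavior concrete, here is a minimal sketch in Python. It is not Wingspan's actual hook: the `RECOMMENDATIONS` config shape, the marker file, the keyword list, and the plugin name are all hypothetical stand-ins chosen for illustration.

```python
from pathlib import Path

# Hypothetical config: each entry maps an ecosystem to the signals that
# reveal it and the companion plugin to suggest. Supporting a new ecosystem
# means adding an entry here, not changing the logic below.
RECOMMENDATIONS = [
    {
        "ecosystem": "flutter",
        "marker_files": ["pubspec.yaml"],
        "keywords": ["flutter"],
        "plugin": "flutter-companion-plugin",  # placeholder name
    },
]


def detect_plugins(project_dir, prompt_text, already_recommended):
    """Return plugins to recommend, skipping any already suggested this session."""
    root = Path(project_dir)
    text = prompt_text.lower()
    suggestions = []
    for entry in RECOMMENDATIONS:
        # Signal 1: files that already exist in the project.
        has_marker = any((root / name).exists() for name in entry["marker_files"])
        # Signal 2: the technology named in the brainstorm or plan prompt.
        has_keyword = any(word in text for word in entry["keywords"])
        plugin = entry["plugin"]
        if (has_marker or has_keyword) and plugin not in already_recommended:
            suggestions.append(plugin)
            already_recommended.add(plugin)  # recommend once per session
    return suggestions
```

Passing the same `already_recommended` set for the whole session is what keeps the recommendation from repeating, and the keyword check is what lets it fire in an empty directory.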

Markdown-First Architecture for Skills and Agents

One of the earliest decisions — and one we never regretted — was making everything markdown.

Skills are markdown files with YAML frontmatter. Agents are markdown files with structured prompts. Templates are markdown. Plans, brainstorms, reviews, and debriefs — all markdown.

Why markdown over YAML, JSON, or TOML as the primary format? Markdown is the one format that is simultaneously human-readable, version-controllable, CI-parseable, and self-documenting. Every other option optimizes for machines at the expense of contributors.

This has practical consequences:

- Version control works. Every change to a skill or agent is a diff you can review in a PR.

- No special tooling. Anyone can read, edit, and understand a skill without learning a DSL or framework.

- CI validates them. Our pipeline runs markdown lint, spell check, and structural validation on every PR.

- They’re self-documenting. The skill file is the documentation.
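As an illustration of what structural validation in CI might look like, the sketch below checks that a skill file opens with frontmatter and declares the fields a skill needs. It is an assumption-laden example, not our pipeline: the required keys (`name`, `description`) are assumed, and the parser handles only flat `key: value` pairs rather than full YAML.

```python
REQUIRED_FIELDS = ("name", "description")  # assumed required keys


def parse_frontmatter(markdown_text):
    """Parse flat `key: value` frontmatter between leading `---` lines.

    Nested YAML is out of scope here; a real YAML parser would be needed.
    """
    lines = markdown_text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing opening '---'")
    fields = {}
    for line in lines[1:]:
        if line.strip() == "---":
            return fields
        if ":" in line:
            key, value = line.split(":", 1)
            fields[key.strip()] = value.strip()
    raise ValueError("missing closing '---'")


def validate_skill(markdown_text):
    """Return a list of structural problems; an empty list means the file passes."""
    try:
        fields = parse_frontmatter(markdown_text)
    except ValueError as err:
        return [str(err)]
    return [f"missing field: {name}" for name in REQUIRED_FIELDS if not fields.get(name)]
```

Because the skill is plain text, a check like this needs nothing beyond string handling, which is part of the appeal of the format.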

Managing Context Windows Between AI Phases

Large language models degrade as context fills up. Early reasoning gets pushed out, instructions blur, and the model starts making mistakes. We saw this firsthand — a brainstorm that ran long would produce a worse plan. A plan review that loaded too much code would miss architectural issues.

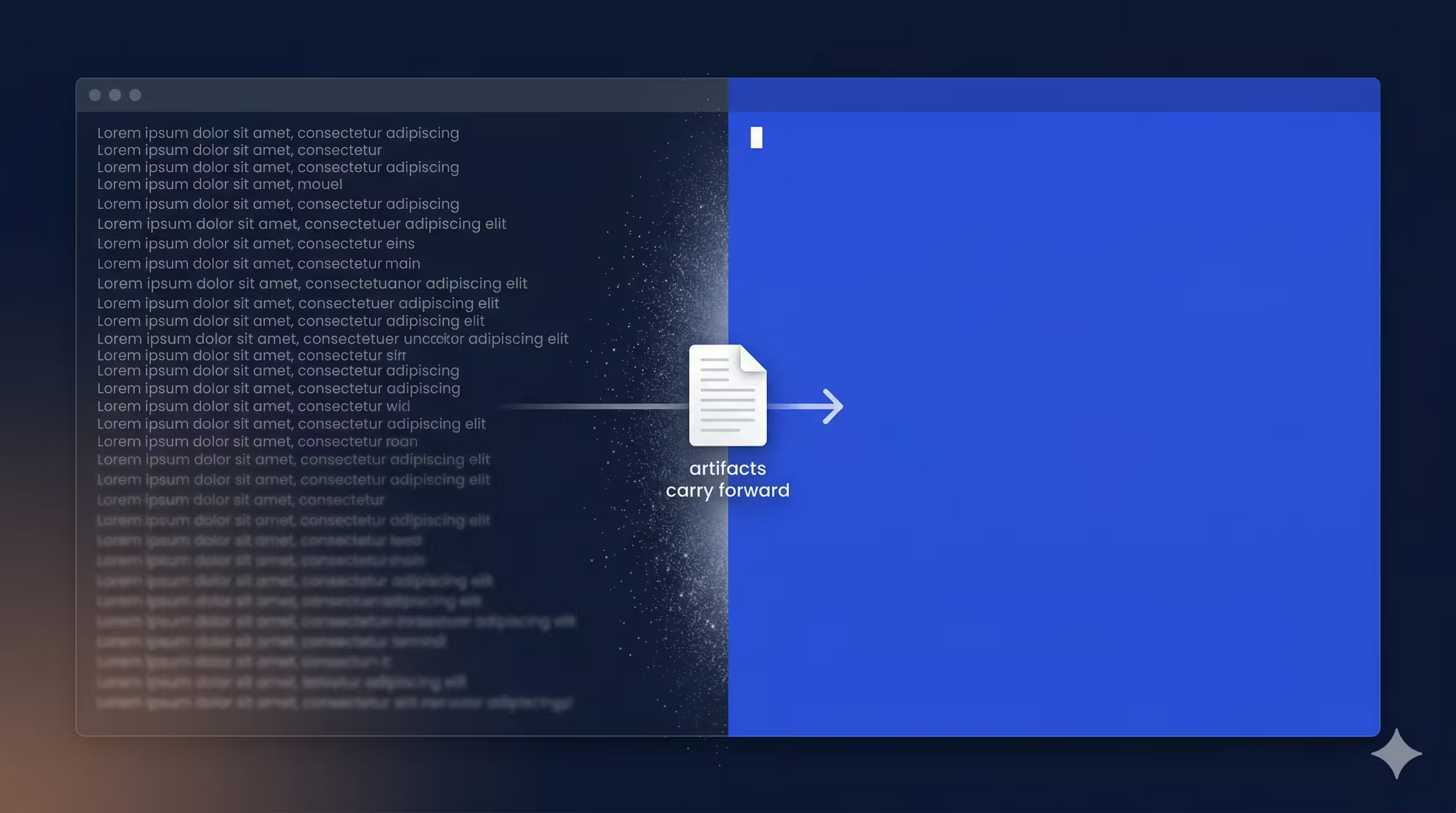

Our solution: clear context between phases. Every transition point offers a “Clear context and [next step]” option. Selecting it invokes the next skill with a fresh context window. The previous phase’s output is already saved to docs/, so nothing is lost — but the model starts with a clean slate.
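The handoff can be pictured as two small steps: persist the artifact, then start the next phase from that artifact alone. The following Python sketch assumes a hypothetical file naming scheme (`docs/<phase>/<slug>.md`) and a generic chat-message list; neither is taken from Wingspan's implementation.

```python
from pathlib import Path


def save_artifact(docs_dir, phase, slug, content):
    """Persist a phase's output under <docs_dir>/<phase>/ and return its path."""
    path = Path(docs_dir) / phase / f"{slug}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(content, encoding="utf-8")
    return path


def fresh_context_for(next_phase, artifact_path):
    """Build the starting context for the next phase from the saved artifact only.

    Nothing from the previous conversation is carried over; the artifact
    is the sole channel between phases.
    """
    artifact = Path(artifact_path).read_text(encoding="utf-8")
    return [
        {"role": "system", "content": f"You are running the {next_phase} phase."},
        {"role": "user", "content": artifact},
    ]
```

The point of the design is visible in the return value: the new context holds two messages no matter how long the previous phase ran.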

Context management also matters within phases. When agents report back during the review phase, full verbose output can consume thousands of tokens and crowd out the reasoning space the orchestrator needs. We learned to have agents return concise summaries rather than exhaustive reports — the details are written to files, but the context window only gets what’s needed to make decisions.
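One way to picture the summary-versus-report split is a helper that writes everything to disk and hands back only a short string. This is a sketch under assumptions: the function name, the report file layout, and the `max_items` cutoff are hypothetical.

```python
from pathlib import Path


def report_back(agent_name, findings, reports_dir, max_items=3):
    """Write the full findings to disk; return only a short summary string."""
    out_dir = Path(reports_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    report_path = out_dir / f"{agent_name}.md"
    # The full detail lives in the file, not in the orchestrator's context.
    report_path.write_text("\n".join(f"- {item}" for item in findings), encoding="utf-8")

    shown = findings[:max_items]
    hidden = len(findings) - len(shown)
    summary = f"{agent_name}: {len(findings)} finding(s)"
    if shown:
        summary += "; top: " + " | ".join(shown)
    if hidden > 0:
        summary += f" (+{hidden} more in {report_path.name})"
    return summary
```

The orchestrator sees one line per agent and can open the file only when a decision actually depends on the detail.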

This pattern was counterintuitive at first. Why throw away context? Because the artifacts carry the decisions forward, and fresh reasoning produces better results than exhausted reasoning.

14 Skills, 10 Agents, 80 Commits

Wingspan took shape over eight weeks, from February to April 2026. The core workflow loop — brainstorm, plan, build, and review — landed in the first four weeks. The remaining time went to the tech-agnostic transformation, the plugin recommendation system, and the skills we kept reaching for: /hotfix for urgent production fixes (skips brainstorm and plan, goes straight to build-and-review), /review for on-demand quality checks against any branch, /debrief for post-incident analysis, and utility skills like /commit and /create-branch for the git operations that bookend every task.

The final count: 14 skills, 10 agents, a hook-based plugin recommendation system, and a CI pipeline that validates every change. Eight weeks from first commit to marketplace release.

What We Learned

1. Structure beats intelligence

A mediocre plan executed with discipline beats a brilliant improvisation. By forcing the AI through brainstorm-then-plan-then-build-then-review, we get consistently better results than letting it freestyle.

2. Multi-agent code review catches what single-pass review misses

Five agents reviewing from different angles — standards, simplicity, testing, architecture, and readiness — catch more issues than a single comprehensive review. The disagreements between them often surface the most interesting problems.

3. YAGNI applies to AI tools too

Early versions of Wingspan had features we never used: complex state machines for skill transitions, elaborate retry logic, and configurable review thresholds. We removed all of it. The simplest version that works is the right version.

4. Context is precious — spend it wisely

Every token of context that goes to stale information is a token not available for fresh reasoning. The context-clearing pattern was our single biggest quality improvement.

5. Tech-agnostic is harder but worth it

It was tempting to keep Flutter-specific logic in Wingspan — it worked and our team uses Flutter daily. But separating concerns made Wingspan useful for all our projects and opened the door for companion plugins from the community.

The Four-Phase Workflow

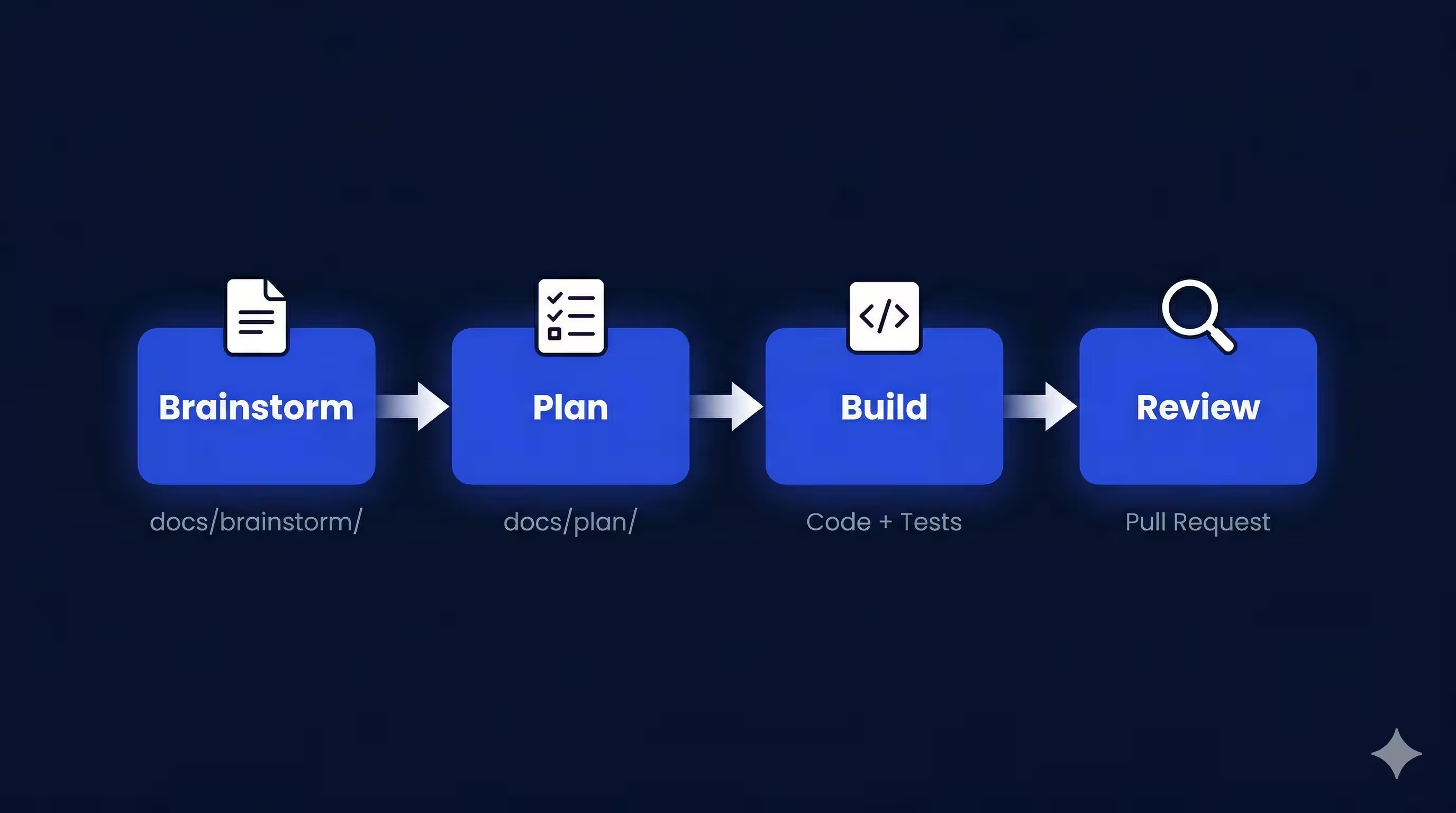

Wingspan is structured around four phases, each producing artifacts that inform the next:

1. Brainstorm (/brainstorm)

This is the discovery phase. You describe a problem or idea, and Wingspan opens a collaborative dialogue to explore requirements, constraints, and approaches. Instead of rushing to code, it asks questions, surfaces trade-offs, and clarifies the actual problem. Brainstorm is a separate phase because the best implementation starts with a shared understanding of what you’re building and why, something AI tools routinely skip.

The output is a brainstorm document saved to docs/brainstorm/. This document captures the “why” and the “what” before anyone writes a line of code.

2. Plan (/plan)

Once the brainstorm is solid, Wingspan transforms it into an actionable implementation plan. For existing projects, this phase reviews the codebase, identifies relevant patterns and conventions, and produces a step-by-step plan with clear acceptance criteria. For greenfield projects, it researches the target stack and establishes the conventions the implementation will follow. Either way, the plan phase exists as a separate step because we’ve seen AI tools produce perfectly reasonable code that solves the wrong problem — or solves the right problem in a way that ignores the project’s existing conventions.

Plans come in three sizes — minimal, standard, and extensive — matched to the complexity of the task. A simple bug fix gets a minimal plan. A new feature spanning multiple layers gets an extensive one with phases, risks, and dependencies.
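As a rough illustration of matching plan size to complexity, the sketch below turns the two examples above into a toy rule. The inputs (`layers_touched`, `is_bug_fix`) and the thresholds are assumptions made for the example; in practice the choice is a judgment made during planning, not a fixed formula.

```python
from enum import Enum


class PlanSize(Enum):
    MINIMAL = "minimal"
    STANDARD = "standard"
    EXTENSIVE = "extensive"


def choose_plan_size(layers_touched, is_bug_fix):
    """Illustrative heuristic only; the thresholds are assumptions."""
    # A simple bug fix confined to one layer gets the lightest plan.
    if is_bug_fix and layers_touched <= 1:
        return PlanSize.MINIMAL
    # Work spanning several layers gets phases, risks, and dependencies.
    if layers_touched >= 3:
        return PlanSize.EXTENSIVE
    return PlanSize.STANDARD
```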

The plan is saved to docs/plan/, ready for the build phase.

3. Build (/build)

This is where code gets written. Wingspan executes the plan and writes tests alongside the implementation, producing a working branch ready for review. This is also where companion plugins become active — if the plan specifies Flutter, the Flutter plugin’s scaffolding, linting, and framework-specific conventions guide the implementation.

4. Review

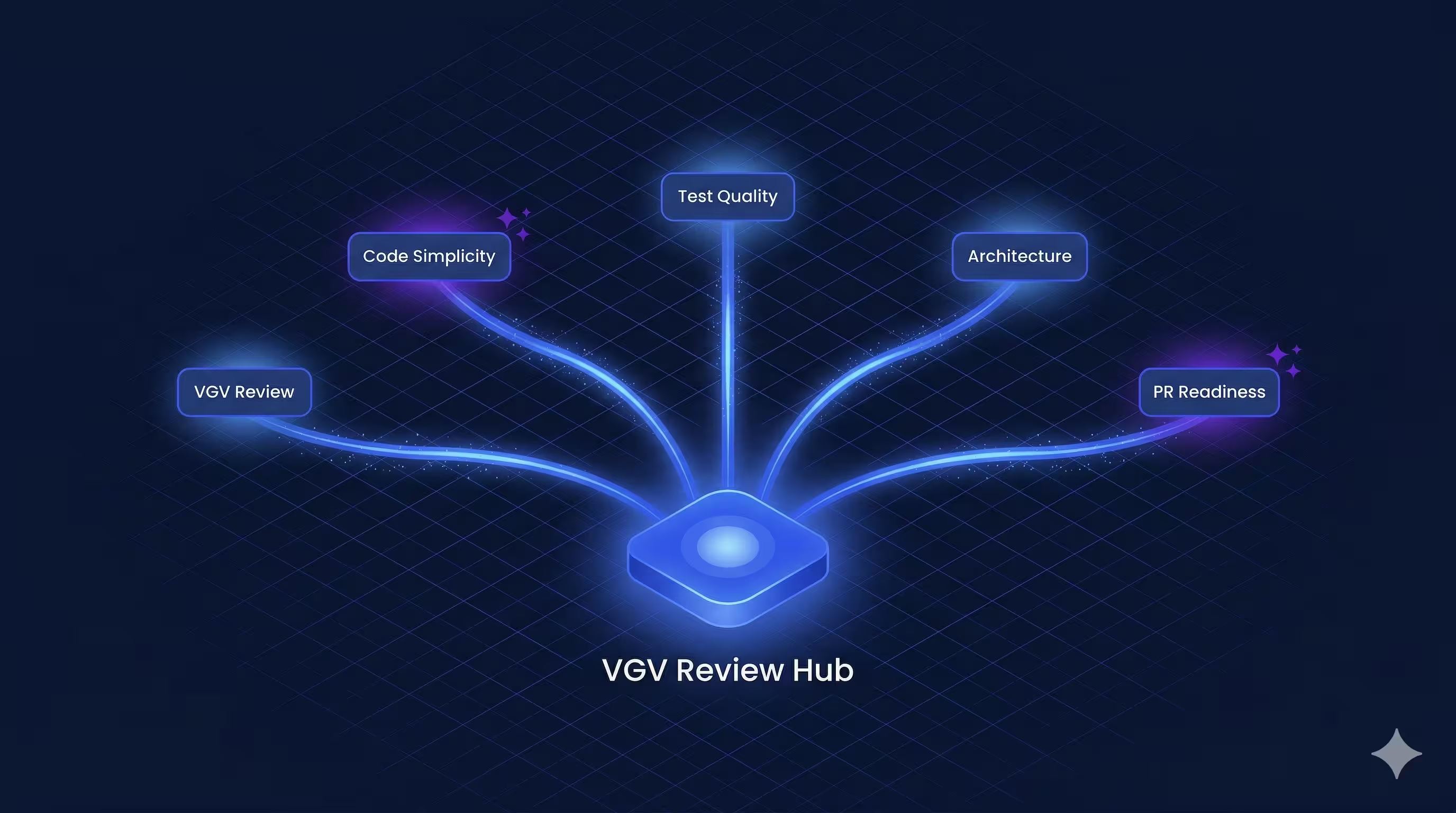

Before opening a pull request, Wingspan runs a multi-agent quality review. Five specialized agents review the work in parallel:

- VGV Review Agent — Checks adherence to Very Good Ventures engineering standards

- Code Simplicity Agent — Flags YAGNI violations and premature abstractions

- Test Quality Agent — Verifies every testable unit has meaningful tests

- Architecture Agent — Validates layer separation and dependency direction

- PR Readiness Agent — Final pre-merge checks (CI compatibility, merge conflicts)

Issues are categorized as critical (must fix), important (should fix), or suggestions (noted in the PR). The review phase doesn’t just check for bugs — it enforces the engineering practices that let teams scale.
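The fan-out and categorization can be sketched in a few lines of Python. This is a simplified model, not the plugin's orchestration: reviewers are plain callables standing in for agents, and the rule that only critical findings block the pull request is an assumption drawn from the "must fix" label.

```python
from concurrent.futures import ThreadPoolExecutor

SEVERITIES = ("critical", "important", "suggestion")


def run_review(reviewers, diff):
    """Run every reviewer on the same diff concurrently and group findings by severity.

    `reviewers` maps an agent name to a callable returning (severity, message) pairs.
    """
    grouped = {severity: [] for severity in SEVERITIES}
    with ThreadPoolExecutor(max_workers=max(1, len(reviewers))) as pool:
        # Submit all reviewers first so they run in parallel.
        futures = {name: pool.submit(fn, diff) for name, fn in reviewers.items()}
        # Collect in submission order so the grouped output is deterministic.
        for name, future in futures.items():
            for severity, message in future.result():
                grouped[severity].append(f"[{name}] {message}")
    return grouped


def ready_for_pr(grouped):
    """Assumed gate: critical issues block the pull request; other levels do not."""
    return not grouped["critical"]
```

Each reviewer sees the same input and knows nothing about the others, which is what lets their findings disagree in useful ways.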

Getting Started

Wingspan is our attempt to answer a question every engineering team using AI tools eventually faces: how do you get the AI to work the way your team works? The answer, for us, was to encode the workflow — brainstorm, plan, build, review — and let the AI execute it. If that pattern is useful for your team, Wingspan is open source and available on the Claude Code marketplace.

/plugin marketplace add VeryGoodOpenSource/very_good_claude_code_marketplace

/plugin install vgv-wingspan@very_good_claude_code_marketplace

Start with /brainstorm and see where it takes you.