We’re Heading Back to Las Vegas

Very Good Ventures (VGV) is proud to return to Google Cloud Next ’26, held April 22–24 at the Mandalay Bay Convention Center. This year, we’re showing up with something that’s been years in the making, and we think it changes how digital products are built and experienced.

Come find us in the Agentic Mobile & Web demo zone, where we’ll be running live demos of Flutter’s GenUI SDK: the technology that makes interfaces adapt, in real time, to who’s using them.

The Next Era of Multi-platform is Generative

For the past several years, the conversation around multi-platform development has centered on one big idea: build once, deploy everywhere. At VGV, we’ve lived that idea, from theme park kiosks and sports apps to enterprise booking tools and financial services dashboards, and we’ve seen firsthand how much it changes what’s possible for product teams.

But the next frontier isn’t just about running on every device. It’s about adapting to every user.

That’s the shift that Google’s GenUI SDK for Flutter makes possible. Instead of building fixed screens that every user sees the same way, GenUI dynamically assembles interfaces at runtime—selecting brand-safe visual components like cards, sliders, dropdowns, and maps based on the specific user’s intent, context, and behavior. The interface becomes a living thing. It responds. It anticipates. It guides.

This isn’t a prototype. At Google Cloud Next ’26 (GCN ’26), you can see it running live!

What to Expect at Our Demo

You’ll find the VGV team in the Agentic Mobile & Web demo zone across all three days of the event. Here’s what you’ll see:

Flutter’s GenUI SDK in action. Watch a single codebase render adaptive, generative interfaces across mobile & web, each experience personalized to the user in real time.

Financial services tailored to your situation. Our demo walks each visitor through a series of questions about their situation, so you can see how the same approach could work for your product.

The A2UI protocol at work. Under the hood, the LLM (Gemini) never “paints” pixels or generates raw code. Instead, it acts as an orchestrator, selecting components from a curated, brand-safe catalog, which the Flutter GenUI SDK then assembles and renders. The result: AI-generated UI that is predictable, on-brand, and compliant.

A conversation about your product. Whether you’re a Flutter team already looking to integrate GenUI or a product leader on a React or native stack wondering whether this applies to you (it does), we’d love to talk through your specific situation. Bring your questions, your constraints, and your platform challenges.
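For readers who want a feel for the catalog idea behind the A2UI item above, here is a minimal sketch in Python. It is an illustration only: the catalog contents, the JSON shape of the model’s reply, and the `validate_selection` helper are all assumptions made up for this example, not the A2UI wire format or the GenUI SDK’s API (which is written for Flutter, in Dart). The point it shows is that the model only names components and fills in their properties, and anything outside the catalog is rejected before rendering.

```python
import json

# Hypothetical catalog: component name -> set of allowed property names.
# Illustrative only; this is not the GenUI SDK's real catalog.
CATALOG = {
    "card": {"title", "body"},
    "slider": {"label", "min", "max"},
    "dropdown": {"label", "options"},
}


def validate_selection(model_output: str, catalog: dict) -> list:
    """Parse the model's JSON reply and keep only catalog-approved components.

    Raises ValueError if the reply names a component or property that the
    catalog does not define, so nothing off-catalog reaches the renderer.
    """
    selection = json.loads(model_output)
    approved = []
    for item in selection:
        name = item.get("component")
        props = item.get("props", {})
        if name not in catalog:
            raise ValueError(f"unknown component: {name!r}")
        extra = set(props) - catalog[name]
        if extra:
            raise ValueError(f"unsupported props for {name}: {sorted(extra)}")
        approved.append({"component": name, "props": props})
    return approved


# A made-up model reply: it selects components and fills in properties,
# but emits no layout code and no pixels.
reply = json.dumps([
    {"component": "slider",
     "props": {"label": "Monthly savings", "min": 0, "max": 2000}},
    {"component": "card",
     "props": {"title": "Your plan", "body": "Based on your answers"}},
])

for widget in validate_selection(reply, CATALOG):
    # A real renderer would map each approved entry to a native widget here.
    print(widget["component"], sorted(widget["props"]))
```

In this sketch, a reply that asks for a component outside the catalog (say, an `iframe`) raises an error instead of being rendered, which is one simple way to keep generated interfaces predictable and on-brand.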

Why This Moment Matters

At last year’s Google Cloud Next, one theme dominated every conversation: AI is no longer just a talking point; it’s moving fast and breaking new ground. Generative AI showed tangible impact across document processing, real-time agentic interfaces, and developer tooling.

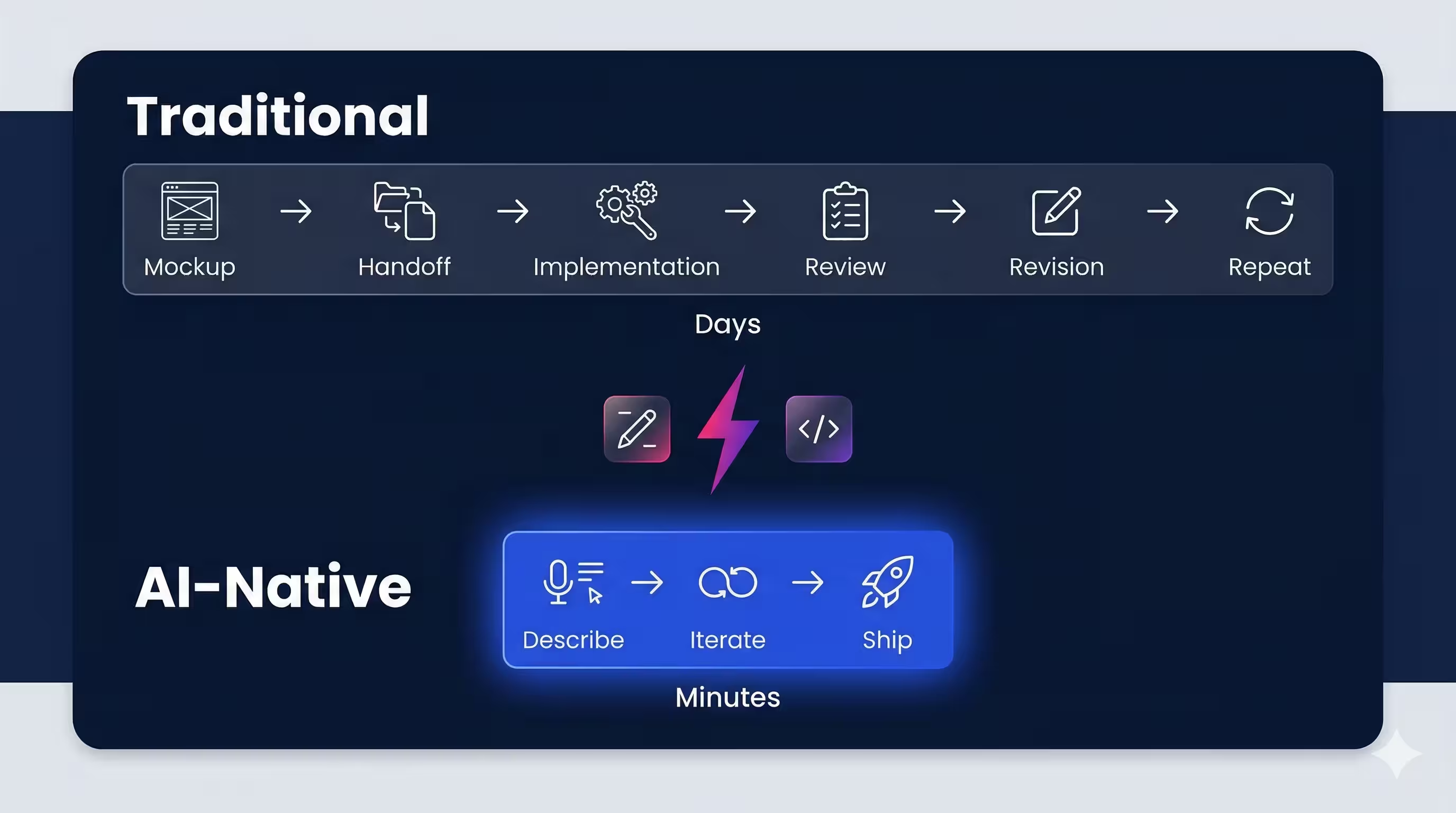

But there was a gap. The most powerful AI systems were still largely text-based. You’d type a prompt, get a response in text, and be left to manually figure out your next step. That interaction model works. It’s just not the most human-friendly one.

GenUI closes that gap. It’s the shift from bots that only talk to bots that show, adapt, and respond visually. Rather than asking users to endlessly refine text prompts, the agent predicts what’s needed next and presents the right visual components to collect it, making task completion faster, more intuitive, and more on-brand.

At VGV, we’ve always found the most interesting work at the edges, before the playbook exists. We were building Flutter apps in production when the framework was still finding its footing. GenUI is that moment again. We’re not here with a polished, off-the-shelf answer. We’re experimenting, iterating, and working to make something genuinely new viable, and we want to bring you along for it.

We’ve been building in this space in partnership with Google, and we believe GenUI will define the next era of UX for intelligent digital products.

A Demo Built for Google Cloud Next

Our GCN ’26 demo isn’t an off-the-shelf walkthrough. We’ve built a purpose-made GenUI experience that shows what adaptive, agentic UI actually looks like in practice: not in a slide deck, but running live on Chromebook, Android, and iOS simultaneously.

The same underlying GenUI logic renders context-aware interfaces across every device. The experience adapts to the user. The interface generates itself.

If you’ve been thinking about what AI means for your product’s UX, this is the live proof of concept you’ve been waiting for.

Come Find Us

Live Flutter GenUI SDK demo: adaptive, generative UI across mobile, web, and every device. Find us April 22–24 at the Agentic Mobile & Web demo zone, Mandalay Bay Convention Center, Las Vegas.

If you’re attending Google Cloud Next ’26, add us to your list. If you want to make sure you connect with a specific member of the VGV team, reach out to us ahead of time.

Learn more about GenUI and connect with us at GCN ’26!

We’ll see you in Vegas 🦄